Hardware-Software Codesign and Autonomous Vehicles

The benefits of designing the parts of a system together

Let’s continue along the big theme of design of AI systems and which aspects of the pre-AI world we should expect to mimic and which we should handle differently. As in the last post, I’ll introduce some reasoning that’s pretty familiar to the creators of computer systems, in this case mixing technical and social considerations. Indeed, an important part of delivering tech products effectively is not just writing good code but also understanding requirements and pushing back on them with key stakeholders. I’m going to try to make a related case for a bigger-picture perspective on how we should be reconfiguring our world to take advantage of AI.

Hardware-Software Codesign

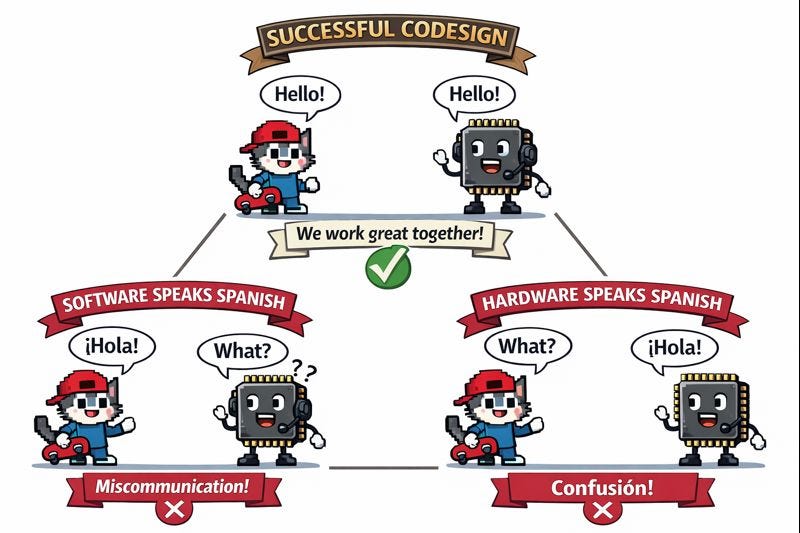

We’re probably all familiar with the distinction between computer hardware and software. Hardware is physical stuff whose design changes relatively infrequently, and software is the rapidly changing instructions that get loaded into the hardware. In the case of programmable hardware like a CPU or GPU, the key service each generation exposes is running programs in a particular machine language. If you want to run software written in the latest-and-greatest machine language, but all you have is a piece of programmable hardware several generations behind, you’re out of luck. We can get an intuition by analogy to humans speaking natural languages. If one party “defects” from a common language, communication breaks down, but if both parties changed languages at once, we’d be fine.

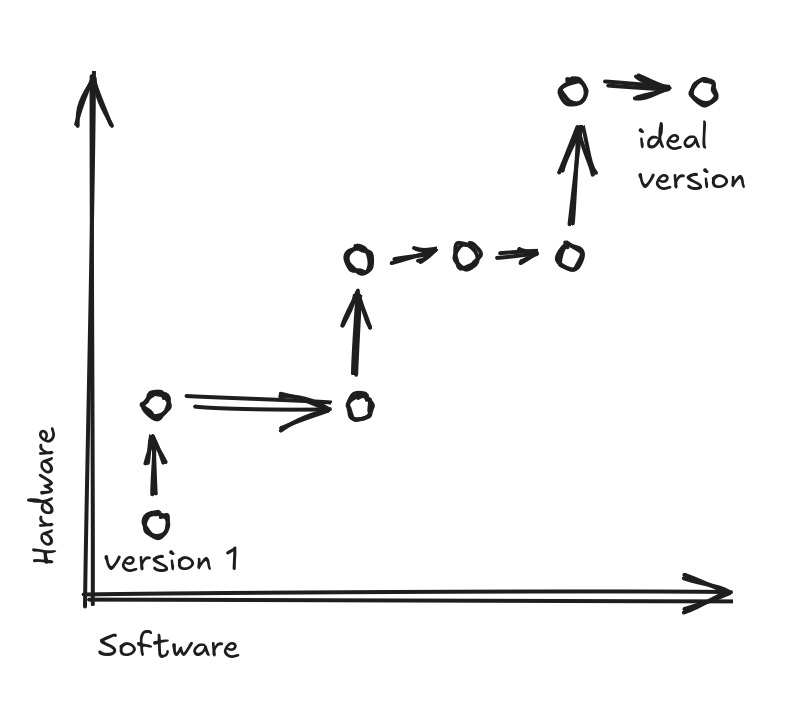

Consider the social processes that lead hardware and software to evolve over time. Often hardware and software artifacts are produced by different teams, whether we are talking about commercial products or open source. We’ve probably all experienced working on teams with relatively narrow purposes. Incentives are set around succeeding within the natural boundaries of a project. The folks improving a software package are excited to see users embrace new features, and if those features depend on new hardware functionality that is imaginary for the time being, there won’t be as many happy users next month, which might mean that the startup building the software package fails at its next fundraising round. Similarly, the engineers behind the hardware can only be sure they have done a good job by running many software programs, and when said engineers add a new feature to hardware, it’s a real obstacle not to have an existing base of software using the feature, to use as test cases. As a result, the most typical mode of evolution within a hardware-software ecosystem is repeated modest improvement by one team at a time, each time in some way that doesn’t require matching work by the other. In the world of CPUs, this problem has shown up as hardware designers overindexing on SPEC benchmarks, standardized programs that everyone is supposed to be able to run quickly. Meanwhile, programming-language designers who want to innovate with fundamentally new features find themselves ignored by hardware designers, who can advance their careers better by speeding up the same old programs.

We can see that it takes an awful lot of steps to make it to the ideal point at the top right of the (highly stylized!) graph. The two axes represent vague senses of improving quality, respectively for hardware and software. Each mostly needs to be improved independently, without much help from the other. However, sometimes one side “accidentally” introduces a new feature that turns out to be crucial for enabling innovation on the other side. With enough of these accidents accumulating over time, we might be lucky enough to converge to the ideal overall design.

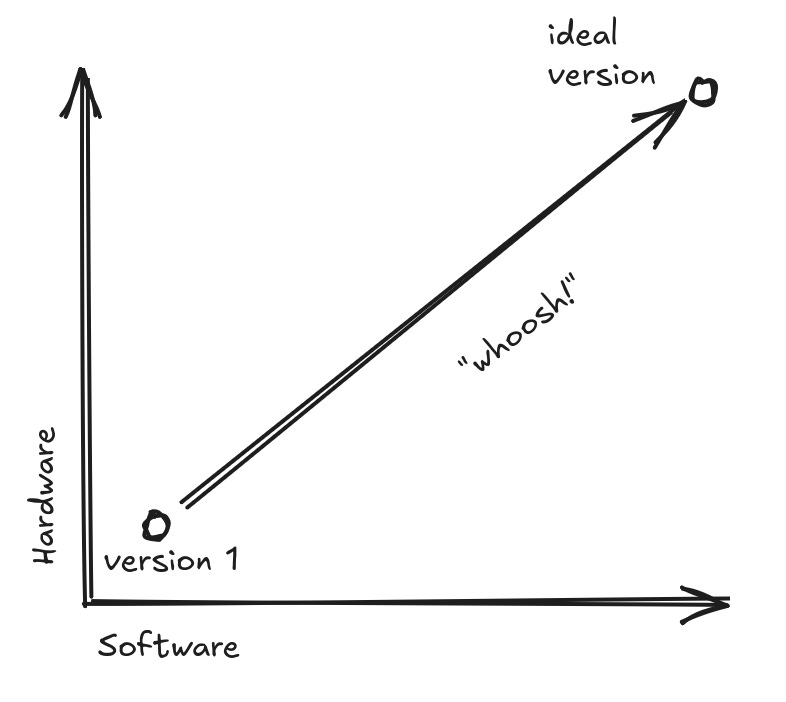

Imagine that somehow there is enough breathing room for the hardware and software engineers to lock themselves in a conference room for a month. Their goal is to design the whole ecosystem holistically. For any great feature idea, it should no longer be a valid excuse that it would require too many changes from other parts of the system. The team does a tasteful job of what we call in the biz hardware-software codesign.

Continuing our loose interpretation of these graphs, the one long arrow may take significantly longer to deliver anything at all, compared to any of the much-shorter arrows in the previous graph. However, when we check in after the time needed for several steps of the more-incremental method, we may find that we have arrived at a unified system that works much better for everyone.

The current AI revolution actually depends on relatively big leaps of this kind of codesign. Nvidia is the market leader in GPUs, which power the rise of deep learning. There’s no doubt that Nvidia is excellent at creating hardware for running this kind of workload. However, it is commonly believed that the real central advantage that helped Nvidia dominate the market is their CUDA programming framework. CUDA programs are usually embedded inside older programming languages like C++, so there’s still a flavor of incrementality to it. However, CUDA introduced a more-pleasant style of parallel programming, where many things are happening at once in a software program; and CUDA is only compatible with Nvidia hardware, motivating programmers to buy only from Nvidia if they’re fans of CUDA.

With CUDA and GPUs being developed at the same company (Nvidia), it was possible to coordinate the teams to do codesign. Developing CUDA would have been hard without cooperation from GPU engineers to add features that compiled CUDA code wants to invoke. Dually, it’s all well and good to hand a programmer a shiny new GPU and say it has the most innovative features, but if the programmer can’t figure out how to write code to use those features, there is no payoff. The CUDA tools can automate generating the needed machine code, saving the programmer from learning grungy new details.

The overall point is that we should fight our tunnel vision about what makes for a good design of a particular artifact, sometimes stepping back to think about the big picture and invest in slower revisions that modify multiple major pieces simultaneously.

Autonomous Vehicles

One of the first 21st-century AI applications with significant “wow factor” was autonomous vehicles, starting with the DARPA Grand Challenge and working our way up to widespread deployment today of Waymo cars that you can hail with a mobile app. I myself am cheering for those cars to come to my city, but I’d like to suggest potential next steps that would be even more exciting, and I’m going to use the metaphor of codesign to make my case.

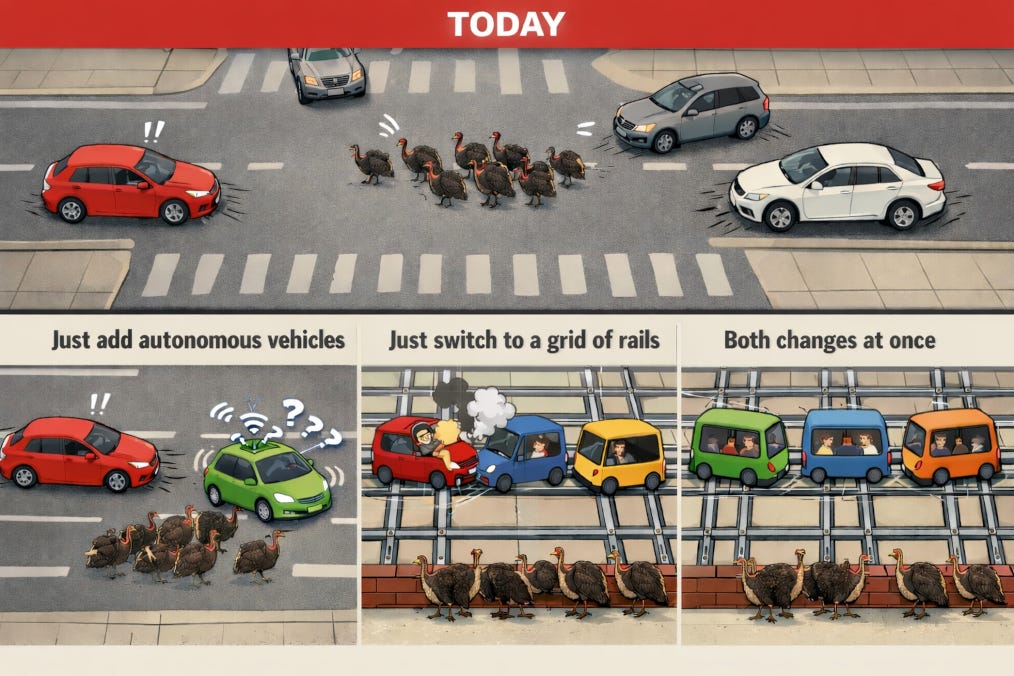

Roads can be complicated environments to navigate safely. Even if an autonomous car handles 99% of scenarios safely, terrible accidents in the remaining 1% will doom adoption. For concreteness, I’ll take as my example of a rare but safety-relevant scenario encountering a gang of turkeys crossing the road, which every autonomous car needs to plan for in Metro Boston. (Some readers are going to assume I’m joking, but, no, I am describing our safari-like atmosphere faithfully.) It seems that we have a wickedly hard AI challenge to train software that knows how to deal safely with all of these scenarios that human drivers take for granted.

Today’s orthodoxy says, challenge accepted! We’ll continuously performance-optimize our AI hardware and software, so that it becomes able to execute more-and-more-intricate logic to deal with driving scenarios. Even more importantly (and centrally to the mainstream view), we’ll keep collecting more and more training data, used to produce the next-generation smarter software. The autonomous car will make mistakes, but every mistake becomes training data that helps the next generation avoid repeating it.

Waymo and its competitors have already shown that this approach works quite well, though at rather high engineering cost. When we read about tech companies building data centers at a breakneck pace, it’s to build out compute power to support this kind of training. All else being equal, it would be better if we didn’t need to crunch reams of driving data to train better autonomy software.

I should also be clear that I’m about to engage in some brainstorming where we don’t get into details of how societies make decisions about infrastructure. That’s the spirit of codesign, imagining opportunities from coordinated changes, but merely invoking codesign doesn’t help us with the politics of getting everyone on-board. The engineers behind autonomous cars are doing a great job coping with the aspects of transportation infrastructure that seem hard to modify today. We should just be ready to seize opportunities looking forward.

I’m arguing that our problem is assuming that the driving environment remains the same, and we just start injecting increasingly many autonomous cars. What if we dramatically simplified, in some ways, the environment that vehicles traverse, at the same time as making it more-complex in some ways that would challenge human drivers? Specifically, what if we switched to a grid of train-style rails, physically separated from space where humans (and turkeys) have free rein to roam? Dropping autonomous cars into today’s driving environment is challenging, and dropping human drivers into our hypothetical rail network is risky, but we can go at this problem codesign-style and make both moves simultaneously. (This approach was first suggested in the 1950s under the heading of personal rapid transit, and while it failed to catch on, it seems worth considering whether technology improvements in the meantime change the balance of feasibility.)

Of course, there is plenty of prior art for creating simplified environments for vehicles. A subway network is a good example, with physical safeguards to keep people from running into the tunnels. The even older above-ground train networks are a less-extreme example, where it’s trivially easy for pedestrians or motorists to stray onto the tracks. Parts of our current road network could even be turned into a system in that spirit, where, say, it’s illegal for humans to walk in them. The important advantage of this style is that an autonomous vehicle only needs to notice that something abnormal is going on so that it can initiate a fail-safe mode like coming to a safe stop (at which point the police swoop in, restore expected conditions, and fine anyone responsible for the disruption). The vehicle does not need to understand the subtleties of different kinds of abnormal scenarios (e.g. turkeys vs. Cthulhu rising). I should mention here that deployed autonomous-vehicle software already tries to identify weird situations and trigger fail-safe behavior (do you remember the recent San Francisco power outage and how it scared many Waymo cars into phoning home to get permission from humans to proceed?), but the point is that the detection problem should become much simpler in purpose-built environments.
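To make the contrast concrete, here is a minimal Python sketch of the fail-safe idea described above: the vehicle checks only whether everything it perceives belongs to a short list of expected things, and it stops otherwise. The label names, the `EXPECTED_LABELS` set, and the `decide` function are all hypothetical, invented for illustration; I’m not claiming any deployed system works this way.

```python
from enum import Enum


class Action(Enum):
    PROCEED = "proceed"
    SAFE_STOP = "safe_stop"


# Hypothetical whitelist: the only things a vehicle should ever perceive
# inside a purpose-built, enclosed guideway.
EXPECTED_LABELS = frozenset({"rail", "vehicle", "station"})


def decide(perceived_labels):
    """Return SAFE_STOP if anything outside the whitelist is perceived.

    The vehicle never has to work out *what* the unexpected thing is.
    """
    for label in perceived_labels:
        if label not in EXPECTED_LABELS:
            return Action.SAFE_STOP
    return Action.PROCEED


if __name__ == "__main__":
    print(decide(["rail", "vehicle"]))         # normal conditions
    print(decide(["rail", "turkey"]))          # abnormal
    print(decide(["rail", "cthulhu_rising"]))  # abnormal, handled identically
```

The design choice worth noticing is that turkeys and Cthulhu take exactly the same code path. All of the difficulty moves into keeping the environment so regular that a short whitelist is enough.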

We can even design the environment and standards for the vehicles themselves to simplify the vision problem as much as possible. We want to minimize the computational complexity of both monitoring other vehicles and detecting that something is amiss. For instance, vehicles could be held to close standards of color and shape, especially avoiding complex shapes.

And, as I mentioned established public-transit systems above, I should emphasize that this scenario could support much greater convenience than we’re used to from public transit. Assume all of the autonomous vehicles are required to coordinate over a network and perhaps even participate in a common routing protocol. Then we don’t need to work with any analogue of a small, fixed set of subway lines. The vehicles on the rails can plot appropriate paths for their riders while coordinating to avoid collisions. We avoid frustrating inefficiencies like a line of cars stopped at a traffic light, very slowly getting moving again when the light changes, since each driver has a reaction-time delay from noticing that the car immediately ahead has moved forward far enough. With central coordination in a similar scenario, all the autonomous vehicles could begin moving at once.
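The traffic-light point comes down to simple arithmetic, which the following Python sketch spells out under deliberately crude assumptions: every human driver has the same fixed reaction delay, the first driver reacts to the light, and each later driver reacts to the car ahead. The function names and the numbers in the example (10 cars, a 1-second reaction time, a 0.1-second command latency) are hypothetical, not measurements.

```python
def human_queue_start_times(num_cars, reaction_time_s):
    """Start time (seconds after green) of each car in a human-driven queue.

    Assumes the first driver reacts to the light and each later driver
    reacts to the car ahead, with the same fixed delay for everyone.
    """
    return [(i + 1) * reaction_time_s for i in range(num_cars)]


def coordinated_start_times(num_cars, command_latency_s):
    """Start times when a central coordinator tells every vehicle to go."""
    return [command_latency_s] * num_cars


if __name__ == "__main__":
    cars = 10            # hypothetical queue length
    reaction = 1.0       # hypothetical human reaction delay, in seconds
    latency = 0.1        # hypothetical network command latency, in seconds

    human = human_queue_start_times(cars, reaction)
    coordinated = coordinated_start_times(cars, latency)
    print("last human-driven car starts after", human[-1], "s")
    print("last coordinated car starts after", coordinated[-1], "s")
```

In this toy model, the wait for the last human-driven car grows linearly with the length of the queue, while the coordinated wait stays constant no matter how many vehicles are lined up.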

The central benefit of rails for us is that they reduce degrees of freedom, allowing simpler modeling by vision and control systems. Perhaps we don’t need vision processing by the vehicles at all, and we just rely on sensors on the rails plus stationary smart cameras that watch out for weird situations. (By the way, there’s a nice bonus that I learned from Sustainable Energy – Without the Hot Air, by the late Prof. David J. C. MacKay: that travel on rails is fundamentally more energy-efficient than on roads, because typical car tires on roads face about five times as much rolling resistance as trains running steel on steel.) However, we could still realize similar benefits just by enclosing roads as we know them today, so that they become (1) more-predictable environments with (2) relatively easy tests for when something quite-unusual has happened. There would remain a cybersecurity element of adversaries who try to find ways to perturb such environments dangerously without being detected, but that risk isn’t obviously greater than for autonomous cars on today’s roads.
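For readers who like to see the rolling-resistance comparison as a calculation, here is a small Python sketch using the standard approximation that rolling-resistance force is a dimensionless coefficient times the vehicle’s weight. The two coefficients below are illustrative values I picked to match the roughly fivefold ratio mentioned above, and the 1,000 kg mass is arbitrary; treat all three as assumptions rather than quoted data.

```python
G = 9.81  # gravitational acceleration in m/s^2

# Illustrative rolling-resistance coefficients (assumed, chosen to give
# the roughly fivefold ratio discussed in the text).
C_RR_CAR_TIRE_ON_ROAD = 0.010
C_RR_STEEL_ON_STEEL = 0.002


def rolling_resistance_force(mass_kg, c_rr):
    """Rolling-resistance force in newtons: F = C_rr * m * g."""
    return c_rr * mass_kg * G


if __name__ == "__main__":
    mass = 1000.0  # arbitrary vehicle mass in kg, same for both cases
    road = rolling_resistance_force(mass, C_RR_CAR_TIRE_ON_ROAD)
    rail = rolling_resistance_force(mass, C_RR_STEEL_ON_STEEL)
    print(f"road: {road:.1f} N, rail: {rail:.1f} N, ratio: {road / rail:.1f}")
```

Because the force scales linearly with the coefficient, the ratio between road and rail is independent of the mass chosen. This only covers rolling resistance; air resistance and other losses are outside this sketch.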

Takeaway

This example was meant to illustrate a pattern: we encounter a system with different parts, focus in on one part and its control problem, and notice that it represents a wickedly hard problem for AI. On the one hand, we can celebrate the chance to challenge ourselves and build increasingly better AI, better able to tackle those wicked problems. On the other hand, putting on our codesign hats, we can imagine system-level changes that remove the need to solve those problems, replacing them with easier ones. The easier problems will not just be easier for us to design solutions to. They should also lead to physical equipment that costs less and uses less energy.

In this post, the substrate we started out taking for granted is unenclosed roads. I’ll discuss in the next post another substrate that we take for granted at an even-more-fundamental level, and it will also be one that’s more central to the recent explosion of progress on generative AI.

Thanks for writing this, it clarifies a lot, and I appreciate the emphasis on social considerations in hardware-software codesign, which often involves a crucial human-centric feedback loop to effectively integrate AI systems into diverse real-world contexts.