Cryptography for Delegation in Search

First musings on differences in "hiring" AI to develop better ideas

The last two posts introduced the idea of our societies as distributed systems and cast one aspect of our psychology as an optimization to speed up evolutionary search, by periodically generating hard-to-fake evidence of how qualified certain individuals are in important skills, even if those skills will only come into play much later. Then evolutionary search can focus its attention on the individuals already showing promising signs.

Let’s now imagine search processes where most of the work is done by AI. It’s helpful to be inspired by natural systems, but the scale will be different enough that we should expect new challenges and opportunities. For instance, there may be much more total “thought” happening at once, and individual decisions may be made more quickly.

To be slightly more concrete, let me focus on search for technological advancement. I’ll consider as in-scope, for example, both scientific progress in understanding the natural world and development of a software product for a company. Examples like creating more-compelling art will be out-of-scope. The idea is that this broad domain aims for useful solutions where it is relatively clear how to evaluate final, applied solutions objectively.

We’re used to a complex human system that searches within this space. It involves incentive-compatible tricks like tenure and patents. A largely automated alternative probably works very differently. This post and the next will introduce ingredients from computer science that may come to play large roles, even though they aren’t used similarly in innovation ecosystems today. Then the following two posts will sketch how to apply these tools to specific goals from AI safety and science fiction.

One of the questions we will work our way up to answering is, what are some fundamentally different possibilities when we “hire” AI agents rather than humans to help find new technical solutions, especially when we are very concerned with the accuracy of their conclusions?

Basics of Cryptography

Cryptography is a broad discipline that is surprisingly hard to describe completely in one pithy phrase. I’m going to settle for describing it as supporting secure use of untrusted intermediaries. The most-traditional variation is encryption, which supports one party sending a secret message to another. The intermediary could be a computer network or a human messenger carrying an envelope whose contents, if opened, look like gibberish to a reader who doesn’t know the secret key.

Digital signatures are another important family of cryptographic operations. They make it possible to confirm who wrote a message, since that party signs the message in a way that is hard to forge. With public-key cryptography, the signer’s private key (not shared with anyone else) has a corresponding public key that can be publicized widely, and then anyone who has the public key can check efficiently that a message was signed with the private key.
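
As a minimal sketch of the sign-then-verify pattern, the Python below implements a Lamport one-time signature. That scheme is chosen here only because it needs nothing beyond a hash function from the standard library; it is an assumption made for illustration, and deployed systems typically use schemes such as Ed25519 or ECDSA. The function names are hypothetical, and a Lamport private key must never sign more than one message.

```python
import hashlib
import secrets


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _message_bits(message: bytes) -> list[int]:
    """Hash the message and return its 256 digest bits, most significant first."""
    digest = _h(message)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def generate_keypair():
    """Private key: 256 pairs of random secrets. Public key: their hashes."""
    private_key = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    public_key = [(_h(a), _h(b)) for a, b in private_key]
    return private_key, public_key


def sign(private_key, message: bytes) -> list[bytes]:
    """Reveal one secret per digest bit. Use each private key for ONE message only."""
    return [private_key[i][bit] for i, bit in enumerate(_message_bits(message))]


def verify(public_key, message: bytes, signature: list[bytes]) -> bool:
    """Anyone holding the public key can check the signature with 256 hashes."""
    if len(signature) != 256:
        return False
    bits = _message_bits(message)
    return all(_h(signature[i]) == public_key[i][bits[i]] for i in range(256))


private_key, public_key = generate_keypair()
message = b"I approve this message"
signature = sign(private_key, message)

print(verify(public_key, message, signature))               # genuine message
print(verify(public_key, b"I approve nothing", signature))  # altered message
```

The public key can be handed to anyone, since it contains only hashes of the secrets. Forging a signature on a different message would require inverting the hash for the bits where the two digests differ.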

Let’s review a widely deployed instance of these tools that will be instructive in inspiring distributed AI search for technological innovations. The example I’ve chosen is trusted execution environments (TEEs), which were developed to address concerns that today’s computer systems contain awfully many different parts, written by different engineers, and we should worry that a bug in any important part can lead the system overall to exhibit unsafe or insecure behavior.

We’ll take the example of mobile phones implementing electronic-payment systems, say where you tap your phone screen against a reader at store check-out in the physical world. We don’t want the games installed on your phone to be able to subvert the payment process, but let’s not cap our paranoia there. What about bugs in the operating system? What about bugs in the CPU that runs the operating system, games, and payment software? Can we find a way to trust as little of the hardware and software as possible?

Each TEE includes a minimized trusted core, able to hold the line against fairly arbitrary bad behavior by the rest of the system. It may help to think of the TEE as a fortified safe room inside a larger computer. Such a secure enclave (sounds like something out of postapocalyptic science fiction, right?) would include a few canonical elements.

Private storage for secrets like cryptographic keys

Private compute, like a CPU that lives inside the enclave and is the only compute engine authorized to access the private storage

Carefully controlled communication channels with the rest of the system, ideally looking more like a network protocol than the traditional tight integration between e.g. different CPUs

An attestation engine that I’ll get to describing in a moment

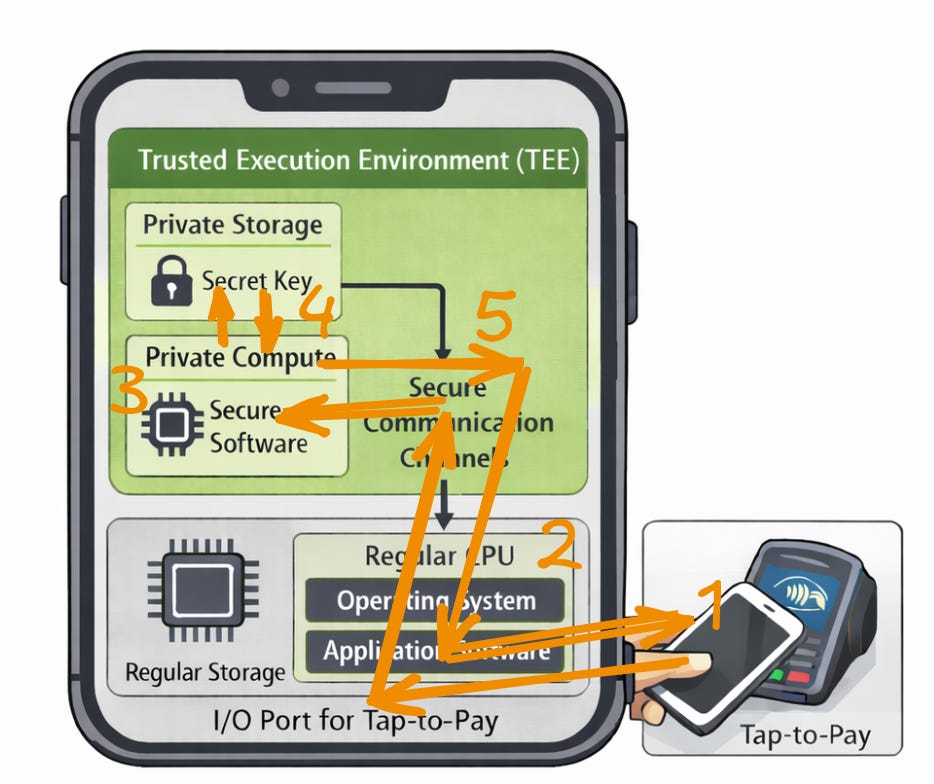

So how does the workflow go to make a simple tap payment with the phone? Here’s a diagram.

The user taps the phone against the payment terminal, which somehow transmits a signal for a particular payment amount, maybe even with an electronic description of what the user is buying.

A normal mobile app analyzes that request and forwards it to the secure enclave, internally within the phone.

Software in the enclave confirms that the request is well-formed and otherwise legitimate.

The same enclave software then retrieves a secret key from private storage and uses it to sign the payment request, indicating the user’s approval, ideally after using some secured path to the display and sensors to check that the user really means to pay, without a chance for operating-system bugs to accept such approval spuriously.

The enclave conveys the signed request back to the main phone, which goes on to send it back to the payment terminal.

The cool benefit of cryptography is that the TEE can hand off a signed payment request to the untrusted remainder of the phone, which might misbehave in ways including deleting the request or sending it to the wrong place. However, the rest of the phone does not have access to the secret key, so the consequences of its misbehavior are limited. Only the TEE can produce convincing signatures, which can then be used in arbitrary ways.
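
The workflow above can be sketched in a few lines of Python. Everything here is a hypothetical illustration: the `SecureEnclave` class, the request fields, and the spending limit are invented for the example, and HMAC (a keyed hash from the standard library) stands in for a real digital signature. That substitution means the verifier, here a hypothetical payment backend, must share the enclave's key, whereas a public-key signature would let anyone verify without being able to sign. Python also cannot enforce the isolation that real enclave hardware provides; the leading underscore only marks what the untrusted side is not supposed to touch.

```python
import hashlib
import hmac
import json
import secrets


class SecureEnclave:
    """Toy model of the enclave: it alone holds the signing key."""

    MAX_AMOUNT_CENTS = 50_000  # hypothetical policy limit

    def __init__(self, key: bytes):
        self._key = key  # private storage (isolation is by convention only here)

    def approve(self, request: dict, user_confirmed: bool):
        """Check that the request is well-formed, then sign it. Returns None on refusal."""
        amount = request.get("amount_cents")
        well_formed = (
            isinstance(amount, int)
            and 0 < amount <= self.MAX_AMOUNT_CENTS
            and isinstance(request.get("merchant"), str)
        )
        if not (well_formed and user_confirmed):
            return None
        payload = json.dumps(request, sort_keys=True).encode()
        tag = hmac.new(self._key, payload, hashlib.sha256).hexdigest()
        return {"payload": payload, "tag": tag}


def backend_verifies(key: bytes, signed: dict) -> bool:
    """The hypothetical payment backend recomputes the tag over the payload it received."""
    expected = hmac.new(key, signed["payload"], hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signed["tag"])


key = secrets.token_bytes(32)
enclave = SecureEnclave(key)

signed = enclave.approve({"merchant": "Corner Store", "amount_cents": 1250}, user_confirmed=True)

# The untrusted part of the phone can drop or misroute the signed request,
# but if it alters the payload, the tag no longer matches.
tampered = dict(signed, payload=signed["payload"].replace(b"1250", b"12"))

print(backend_verifies(key, signed))     # untouched request
print(backend_verifies(key, tampered))   # payload altered in transit
print(enclave.approve({"merchant": "Corner Store", "amount_cents": 1250}, user_confirmed=False))
```

The untrusted code only ever sees the payload and the tag, never the key, which is the property that limits the damage it can do.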

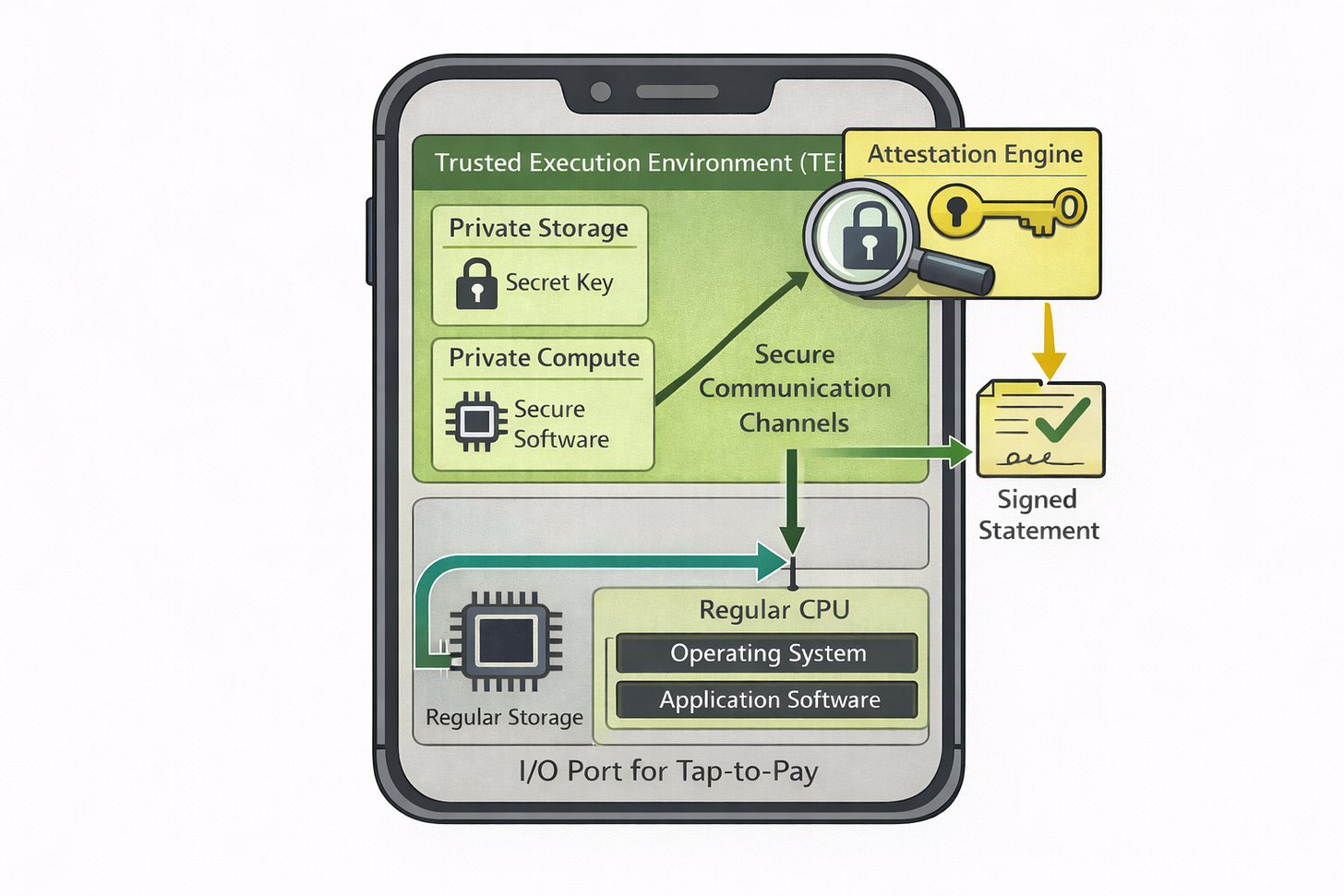

There is still a problem with this workflow: how do we know the right software is loaded into the TEE? Ordinarily we trust the operating system to manage which software is loaded, but here we are trying to avoid such trust. The canonical solution is attestation. The TEE includes a further component whose job it is to control what software has been loaded and to reassure the rest of the world of that fact.

In our cartoon model, applications can ask the attestation engine to do its thing: examining the state of the secure elements, summarizing them (canonically by hashing all of the code) in a certificate, and cryptographically signing that certificate. Through the magic of cryptographic hashing and digital signatures, a certificate can establish exactly which software is loaded, even if the certificate is much shorter than the software code. The less-trusted part of the phone can, for instance, pass that certificate off to a remote user who is worried about proper behavior of the TEE.
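
To make the hashing step concrete, here is a small Python sketch of how such a summary (often called a measurement) might be computed. The component names and contents are made up, and the particular way of combining per-component hashes is an assumption for illustration rather than any vendor's actual format. Signing the resulting measurement is left out. The sketch shows that the measurement stays 32 bytes however large the code is, and that changing a single byte of code changes the measurement.

```python
import hashlib


def measure(components: dict[str, bytes]) -> bytes:
    """Fold every loaded component, in a fixed order, into one SHA-256 digest."""
    measurement = hashlib.sha256()
    for name in sorted(components):
        # Hash each name and body separately so component boundaries are unambiguous.
        measurement.update(hashlib.sha256(name.encode()).digest())
        measurement.update(hashlib.sha256(components[name]).digest())
    return measurement.digest()


# Hypothetical enclave contents: a small loader and a larger payment applet.
loaded = {
    "loader": b"\x90" * 4_096,
    "payment_applet": b"\x01\x02\x03\x04" * 250_000,  # about 1 MB of "code"
}
expected = measure(loaded)

# A remote party who knows the expected measurement compares 32 bytes,
# not megabytes of code.
patched = dict(loaded, payment_applet=loaded["payment_applet"][:-1] + b"\xff")

print(len(expected))                  # size of the measurement in bytes
print(measure(loaded) == expected)    # same code, same measurement
print(measure(patched) == expected)   # one byte changed
```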

It is worth remarking on where the trust story bottoms out for TEEs that do attestation. It could still be the case that the attestation engine or the secure processor themselves contain bugs. However, the conventional approach accepts that risk but attaches it to the reputations of hardware manufacturers. We trust that the manufacturer is competent enough to implement and manufacture those boxes properly, protecting secret keys. That way, when anyone in the world receives a certificate signed with those keys, there is a chain of trust back to the manufacturer, and we can believe that proper inspection of TEE state was done to produce the certificate – regardless of which intermediaries help convey certificates to those who want to check them.

This lightning overview of TEEs gives us the essential ingredients for seeing how cryptography can streamline future systems where AIs and humans collaborate in searching spaces of technical ideas. The powerful idea of TEEs that we will carry over is expensive checks performed rarely, whose results can be verified cheaply everywhere.

Certifying Ideas and their Sources

Let’s stop to consider some mechanisms we use today to collaborate globally on developing and evaluating better technical ideas, finding connections to the kind of trust flow we just analyzed with TEEs. From that base, we can imagine how we could go further in future systems leaning heavily into AI.

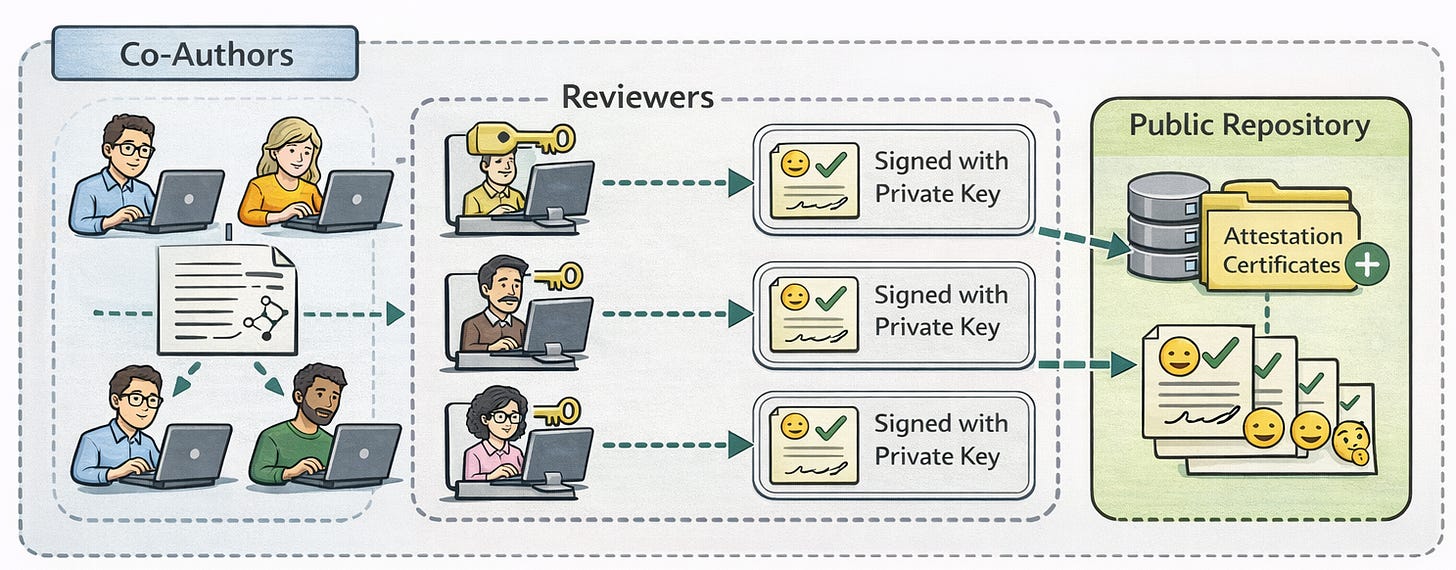

One time-honored method is peer review of scientific papers, where experts on subject matter are recruited to evaluate papers and attest to how convincing they are. Then laypeople can defer to the reviewers, without being experts in the science themselves. Through this layer of indirection, even specialists like engineers can apply scientific results without having the background to confirm that they are accurate. Cryptography can already be used pretty straightforwardly to let relative nonspecialists confirm which reviewers provided which evaluations: the reviewers can circulate their reviews with cryptographic signatures.

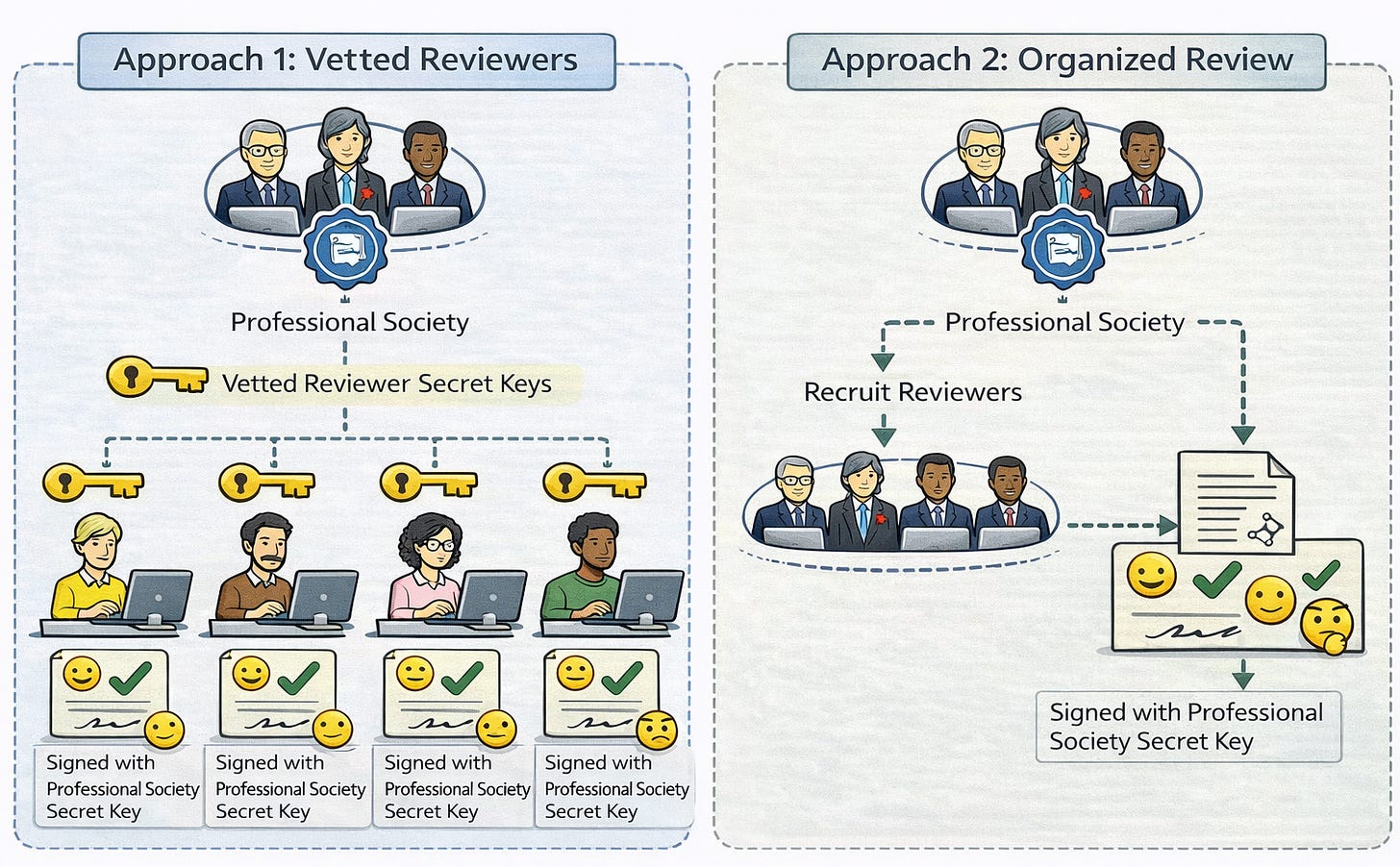

We still run into a problem, analogous to the one we ran into with TEEs and the software that runs in them. The “software” of the peer-review process is about qualifications of the reviewers. A publisher or a professional society can serve as a (relatively) centralized node of trust and sign statements about the qualifications of reviewers. Alternatively, such an organization can run the review process in the first place, being sure only to recruit qualified reviewers, then distributing evaluations signed with the organization’s key. This pattern of trust is reminiscent of a TEE doing attestation with the manufacturer’s key, where a rogue organization could do significant mischief by not following the standards expected by the public.

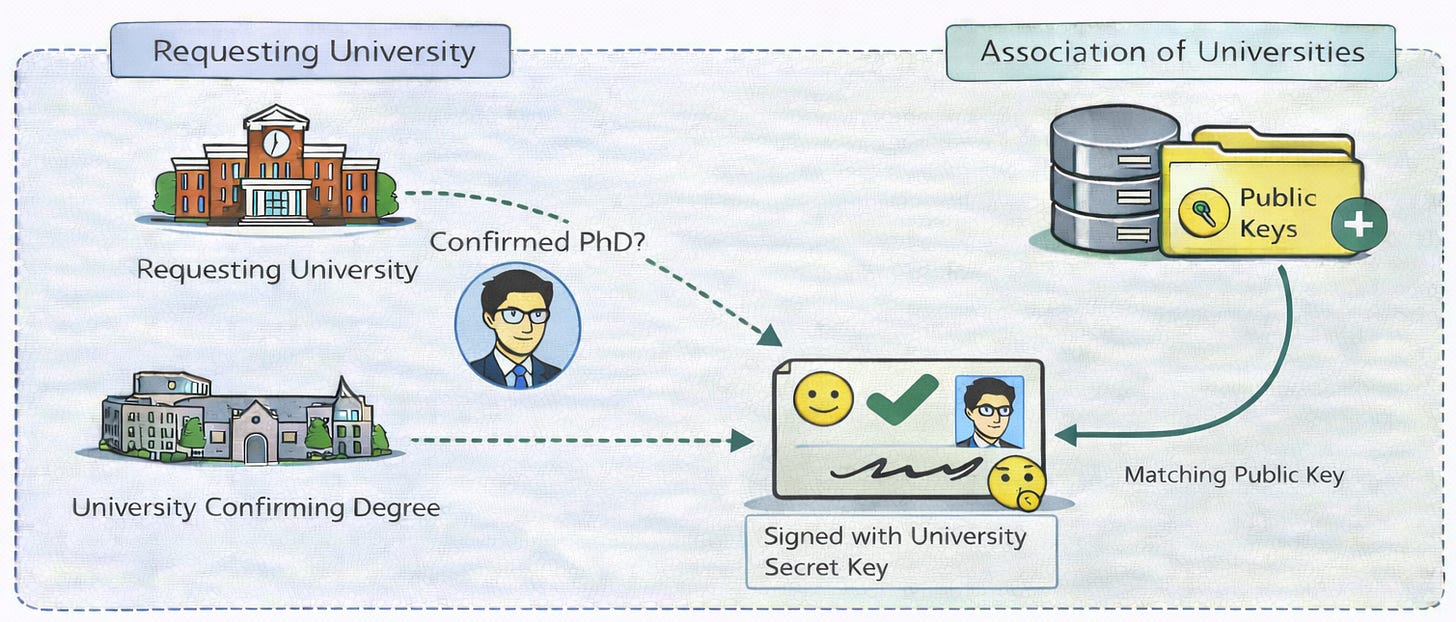

Let us also consider one crucial workflow that we depend on today in technical innovation. An important pathway to receiving the financial resources to carry out a research project is getting a job at a place like a university, which wants to confirm that new hires really have PhDs from the universities they claimed. This verification can work by universities distributing certificates listing people, the degrees they completed, and perhaps their transcripts – digitally signed with universities’ secret keys. An association of universities could maintain a database of vetted public keys, allowing anyone to check signatures.

OK, all of the above flows with cryptography could easily be used to streamline parts of today’s ecosystem, and some of them even are (e.g., see the open standard for Verifiable Credentials). Let’s now think about what could work differently with AI agents taking over much of the process of searching for better technical solutions. Their proposed solutions could be evaluated by humans or other AI agents, and we could use cryptography in the same way to allow consumers to trust the evaluations they use to make decisions about which solutions to adopt, so long as they trust evaluators or organizations that credential them. Let’s call this process first-order evaluation, as the object of evaluation is the proposed technical solutions, which are distinct from the evaluators.

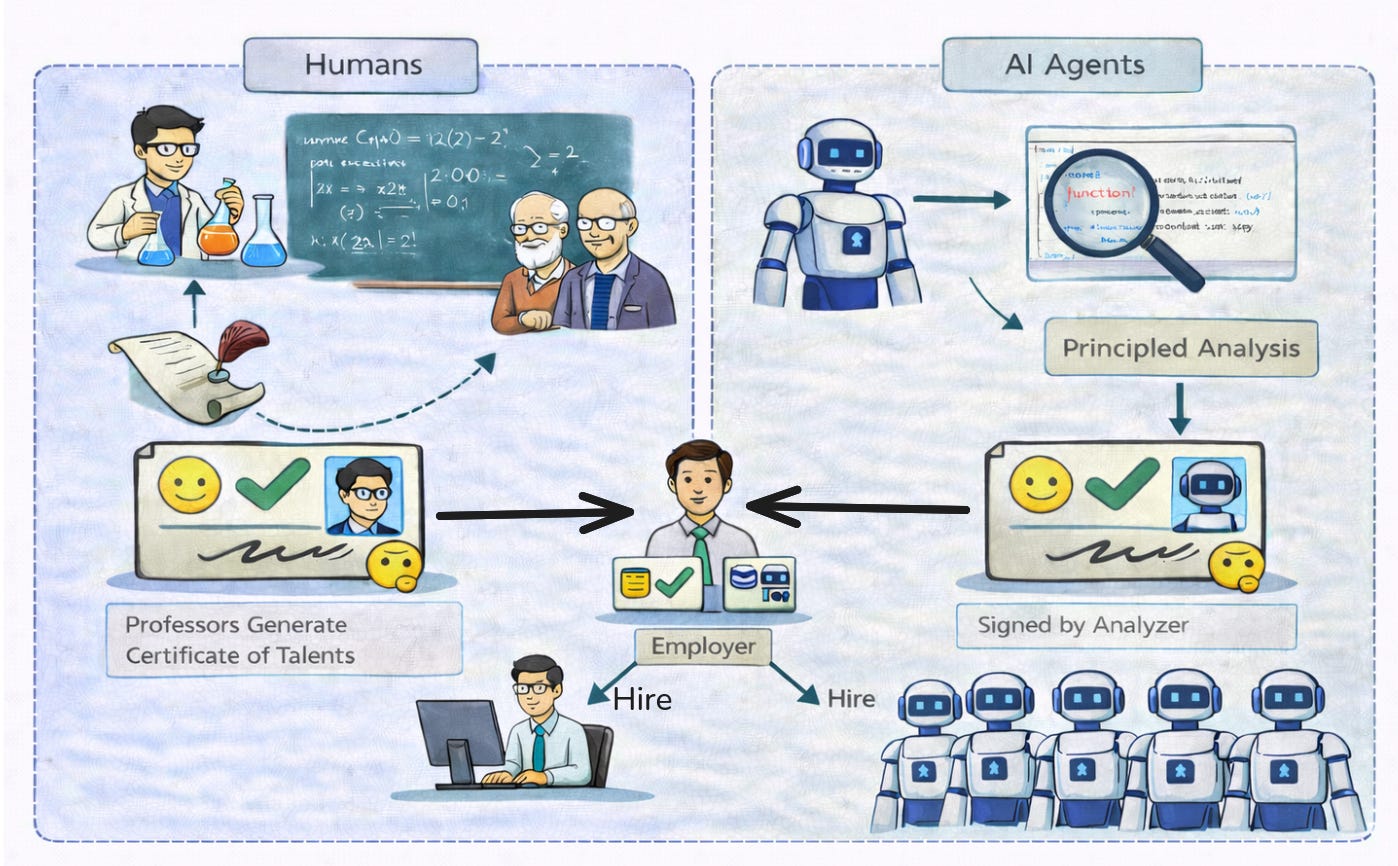

Also important is second-order evaluation, where we evaluate agents, not just their decisions. The degree-confirmation flow above is an example of second-order evaluation, but there are more-interesting possibilities with AI agents.

We can only evaluate humans “from the outside.” A university education, say, subjects them to certain tests of ability, and the results are recorded to produce credentials. When the tests were inadequate to measure certain skills, we don’t know what to expect from that individual in those skills. The situation is like how software testing can miss bugs that don’t show up with the test cases that were chosen. Even if we sequenced the human’s genome, we still couldn’t predict skills with full fidelity, given the effects of a life trajectory, from epigenetics to the influence of family and classmates.

In contrast, with AI agents, their full source code is available. Even if the AI agent (or its producer, who could be separate) doesn’t want to reveal that source code, it could be shared with a trusted evaluator, playing a role like a TEE’s attestation engine. The generated certificate could bind all of that source code in full detail, or it could bind the machine code that the source code has been turned into. Either way, the consumer of the certificate can be sure of running exactly the program that was evaluated. And, very differently from the human situation, the cost can be effectively zero to clone as many copies of the selected agent as desired and have them begin productive work immediately. (This last point answers our early question about new possibilities in “hiring” AI agents.)
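
A rough Python sketch of that evaluate-once, check-everywhere pattern follows. All names are hypothetical, and `expensive_evaluation` is a placeholder for whatever costly analysis the evaluator runs. The signature is a Lamport one-time signature, used here only because it can be built from the standard library's hash function; a real deployment would more likely use a standard scheme such as Ed25519. A one-time key happens to suffice in this toy because the evaluator signs exactly one certificate.

```python
import hashlib
import secrets


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def bits(message: bytes) -> list[int]:
    digest = h(message)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


# Evaluator's one-time key pair (Lamport): 256 pairs of secrets and their hashes.
evaluator_private = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
evaluator_public = [(h(a), h(b)) for a, b in evaluator_private]

evaluations_run = 0


def expensive_evaluation(agent_code: bytes) -> bool:
    """Placeholder for a costly analysis of the agent; here it approves everything."""
    global evaluations_run
    evaluations_run += 1
    return True


def certify(agent_code: bytes):
    """Run the analysis once and sign a certificate binding the code's hash."""
    if not expensive_evaluation(agent_code):
        return None
    certificate = b"approved:" + h(agent_code)
    signature = [evaluator_private[i][b] for i, b in enumerate(bits(certificate))]
    return certificate, signature


def clone_is_certified(agent_code: bytes, certificate: bytes, signature) -> bool:
    """Cheap check for each clone: one hash of the code plus signature verification."""
    if certificate != b"approved:" + h(agent_code) or len(signature) != 256:
        return False
    cert_bits = bits(certificate)
    return all(h(signature[i]) == evaluator_public[i][cert_bits[i]] for i in range(256))


agent_code = b"def act(observation): return 'propose experiment'"
certificate, signature = certify(agent_code)

clones = [agent_code] * 1_000
print(all(clone_is_certified(c, certificate, signature) for c in clones))
print(clone_is_certified(agent_code + b" # backdoor", certificate, signature))
print(evaluations_run)  # how many times the expensive analysis ran
```

Each clone check costs a few hundred hash computations, regardless of how long the original evaluation took, and any modification to the agent's code invalidates the certificate.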

Conclusion

Delegation of trust is a very useful tool for human systems that collaborate to generate good new ideas, and it may be even more important for systems dominated by AI agents. Cryptography is an excellent tool to support delegation while still being sparing in what we trust. By the way, one high-profile use of cryptography, blockchains, deserves enough separate discussion that I’ll have to save it for a later post.

We previously considered how much of our human system that drives innovation relies on often-wasteful signaling, where individuals make costly displays to demonstrate their latent abilities. A future in which agents are software whose exact implementations can be analyzed could be very different: in principle, it is possible to analyze an agent’s fitness up-front, even in a relatively expensive way, and then use cryptography to preserve that verdict so that it can be confirmed cheaply as each clone of the agent comes online or is considered for a new use. The result should be dramatically accelerated evolutionary search through the space of possible agents. Admittedly, it is often wickedly complicated to analyze a realistic program effectively. On the one hand, that difficulty motivates cryptography as a trick to support running an expensive analysis once and then having many parties trust the result. On the other hand, we are still left with the challenge of how to carry out the analysis. The next post explains one approach, via formal verification, where mathematical proof about programs allows us to change the trust architecture dramatically.