Signaling is Programmable Evolution

Thinking about it as a distributed protocol

The last post introduced the perspective of a society of people as a distributed system, in analogy to computer systems under that heading. That is, we have many computational agents that, through cooperation, manage to mask communication delays and partial failures. There are also interesting dynamics around how most of the participants don’t trust each other. In a sense, they are cooperating and competing at the same time.

A distributed protocol is typically invented by a technical team to serve a particular purpose. Natural evolution doesn’t literally design systems, but it can be helpful for us to think of them in that way, to take advantage of intuitions honed elsewhere. In that spirit, I’m going to take a shot at signaling and how we can see it as a pretty-neat protocol for accelerating evolution. This analysis will set the stage for imagining how we can use technology to do even better with artificially intelligent systems. A good broader presentation of signaling among people is The Elephant in the Brain, but let’s leave breadth aside and focus on a higher-level idea of signaling and the role it plays.

Slow Evolution

Evolutionary computing is inspired by biological evolution. Algorithms generate many variant solutions to some problem, measure each one’s fitness, keep just the most-fit variants, and then repeat, biasing the next round of generation toward properties of previous champions. The authors of a search process of this kind would often apologize if evaluating the fitness of one variant took as long as hours. Unfortunately, natural evolution often needs much longer than hours to render its verdict on an individual.
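To make the loop concrete, here is a minimal sketch of that generate, measure, keep, repeat cycle. The function names (`evolve`, `toy_fitness`, `toy_mutate`), the parameter defaults, and the toy problem are all hypothetical choices for illustration, not a reference to any particular library.

```python
import random

def evolve(fitness, mutate, seed_solution, generations=50,
           population=20, survivors=5, rng=None):
    """Toy evolutionary search; every name and default here is illustrative."""
    rng = rng or random.Random(0)
    champions = [seed_solution]
    for _ in range(generations):
        # Generate variants biased toward the previous champions.
        variants = [mutate(rng.choice(champions), rng) for _ in range(population)]
        # Keep the old champions in the pool so the best score never regresses.
        pool = champions + variants
        pool.sort(key=fitness, reverse=True)
        # Keep just the most-fit variants for the next round.
        champions = pool[:survivors]
    return champions[0]

# Hypothetical toy problem: find x that maximizes -(x - 3)^2.
def toy_fitness(x):
    return -(x - 3.0) ** 2

def toy_mutate(x, rng):
    return x + rng.gauss(0.0, 0.5)

best = evolve(toy_fitness, toy_mutate, seed_solution=0.0)
```

Note that `fitness` is called many times per generation. If each call took years rather than microseconds, as it effectively does for natural selection, the loop would crawl.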

Let’s take a cartoon example to think through the pattern. Imagine a colony of lemmings living near a cliff. Despite the propaganda embodied in video games, lemmings don’t routinely follow each other jumping off cliffs. We would expect evolution to weed that behavior out of a population. However, let’s pause to see how much of a computational challenge it is for that weeding-out to take place, if we assume that the risk of falling off cliffs only manifests in especially foggy weather.

Imagine an individual lemming who lives out most of his several-year lifespan without encountering fog. However, a few years into life, the first fog appears, and he confusedly steps off a cliff.

It’s certainly a bummer for the lemming. We could also argue it’s a bummer for the larger project of evolution, developing increasingly fit individuals. (Again, the anthropomorphizing language of “project” here is intentionally inexact in a way that helps our intuition!) The reason is that the lemming had a latent property that reduces fitness: he is prone to walking off cliffs in (rare) foggy weather. Natural selection only obliquely “detects” unfitness through the failure of individuals to survive. Indeed, knowing nothing else besides which animals have survived over a given period of time, we expect a bias toward the survivors being better prepared for future survival, though examples like weather-dependent fitness show that the approximation is imperfect. In the meantime, less-fit individuals go on sharing, for instance, the scarce local food supply.

Luckily, another mechanism for evolution was already analyzed extensively by Charles Darwin: sexual selection. Animals choose their mates based on observed signals of fitness, which also tends to increase fitness population-wide over time, since a lower expected number of offspring for an individual has a long-term effect similar to that of a lower expected lifespan. However, latent qualities can still be hard for sexual selection to measure and act on directly. Hence we arrive at signaling, as discussed in a previous post via the example of natural language. Animals intentionally do weird-seeming things that generate hard-to-fake evidence of their latent strengths. Other animals have learned to evaluate those signals and choose accordingly – even if only on the basis of instinct and not some budding scientist’s appreciation for the sweep of evolution.

For our running example, imagine a workaround to the fact that ability to comport oneself in fog is usually a latent quality. What if lemmings regularly engaged in a sport called fog dancing? Through ritualized expected movements, their peers are able to judge their demonstrated ability to cope with fog. Potential mates will be impressed by good performance. Critically, the fitness signal can appear much sooner, even if it takes unusually long for the next fog to appear; so the overall evolutionary process is accelerated.
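A back-of-the-envelope model can show how large the speedup might be. The sketch below assumes a standard two-type replicator update, in which the fog-prone type has fitness `relative_fitness` compared with 1.0 for everyone else. The specific numbers (fog in 10% of generations, a 50% chance of dying when it comes, a 50% mating penalty for bad fog dancing) are invented for illustration, and using expected fitness per generation is itself a simplification.

```python
def generations_until_rare(relative_fitness, start=0.5, threshold=0.01):
    """Count generations until the less-fit type drops below `threshold`.

    Uses the two-type replicator update share' = share*w / (share*w + 1 - share),
    where w is the less-fit type's fitness relative to 1.0 for the other type.
    """
    if not 0.0 < relative_fitness < 1.0:
        raise ValueError("relative_fitness must be strictly between 0 and 1")
    share = start
    generations = 0
    while share >= threshold:
        share = share * relative_fitness / (share * relative_fitness + (1.0 - share))
        generations += 1
    return generations

# Illustrative, made-up parameters.
FOG_CHANCE = 0.1        # chance that a generation ever encounters fog
DEATH_IN_FOG = 0.5      # chance a fog-prone lemming dies when fog comes
MATING_PENALTY = 0.5    # mating-success penalty for poor fog dancing

survival_only = 1.0 - FOG_CHANCE * DEATH_IN_FOG           # natural selection alone
with_fog_dancing = survival_only * (1.0 - MATING_PENALTY)  # plus the signal

slow = generations_until_rare(survival_only)
fast = generations_until_rare(with_fog_dancing)
```

Under these assumptions `fast` comes out far smaller than `slow`, because the signal applies selective pressure in every generation rather than only in the foggy ones.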

The really cool benefit of this approach is that parts of the tree of life have evolved custom computational capabilities for driving evolution in their contexts. That is, the brains of the individuals are acting as hardware accelerators for making the right kinds of decisions about who should meet reproductive success. Let’s push this analogy further and think about groups of individuals as a distributed system, exploring further refinements that ratchet up the efficiency of evolution.

Signaling as a Distributed Protocol

I’ll shift examples here, which is also handy to illustrate that signaling is not just tied to sexual selection. It also helps with formation of coalitions, where animals intentionally choose collaborators. Being selected into a coalition tends to increase success in survival and reproduction, so the net effect is similar to sexual selection’s.

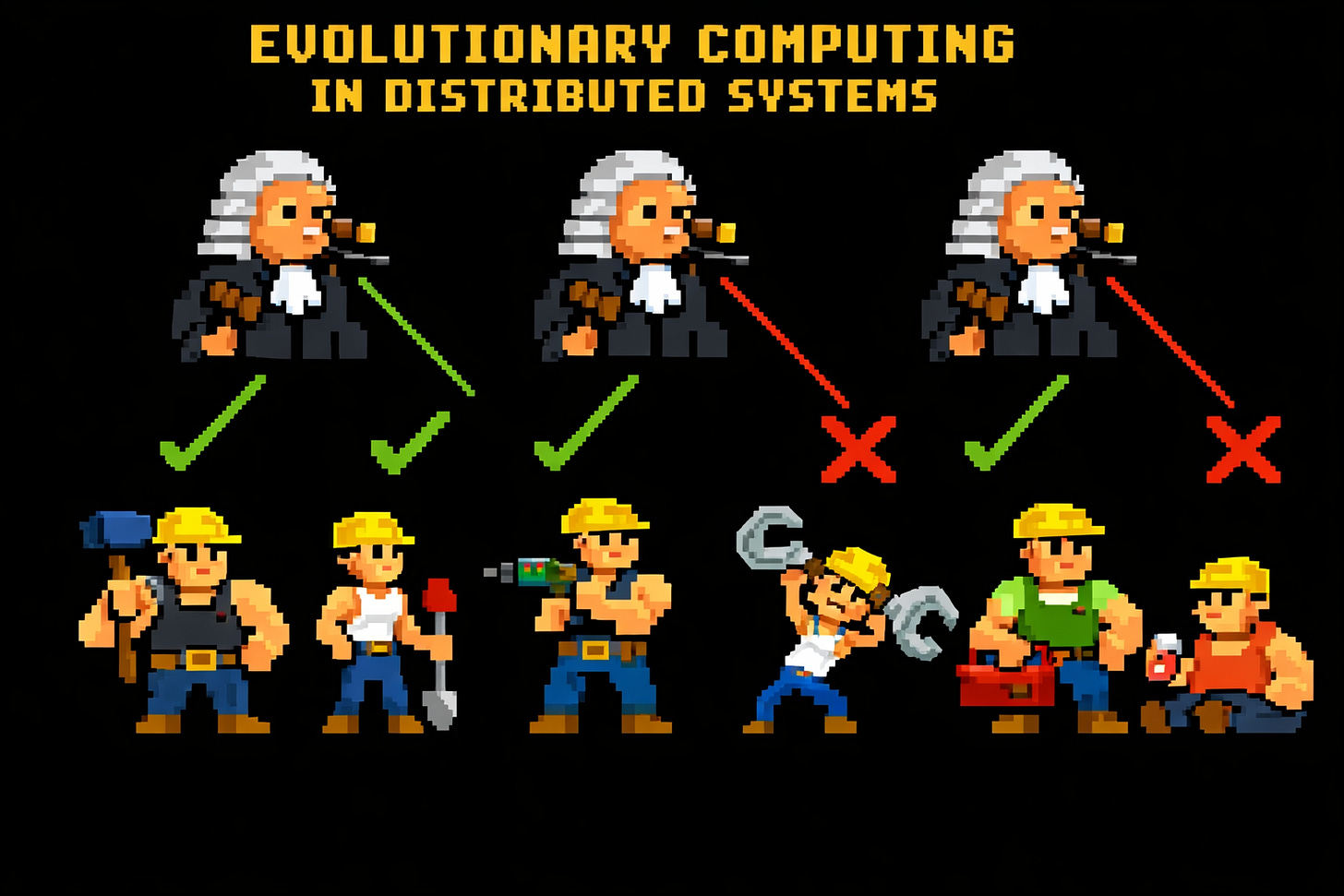

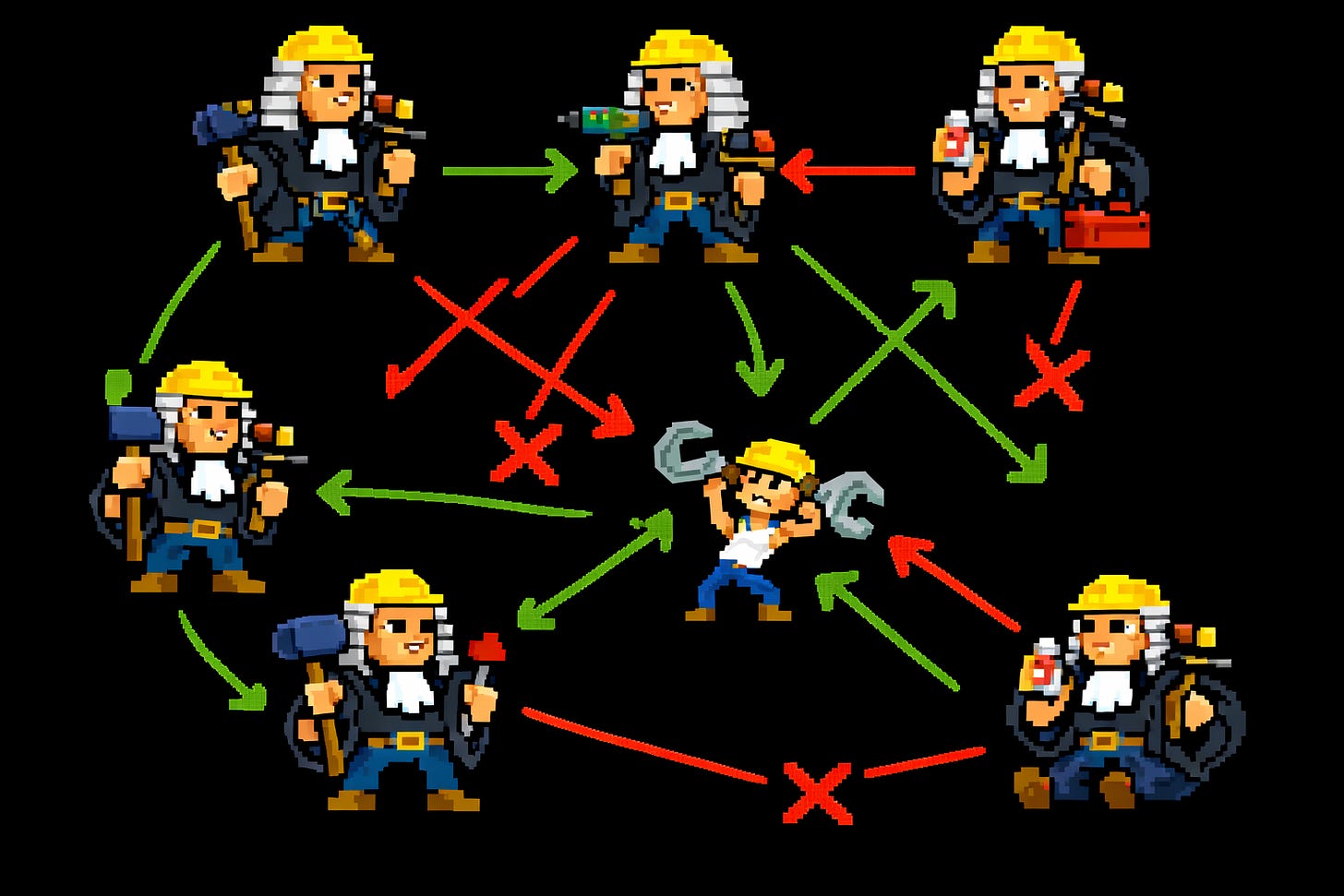

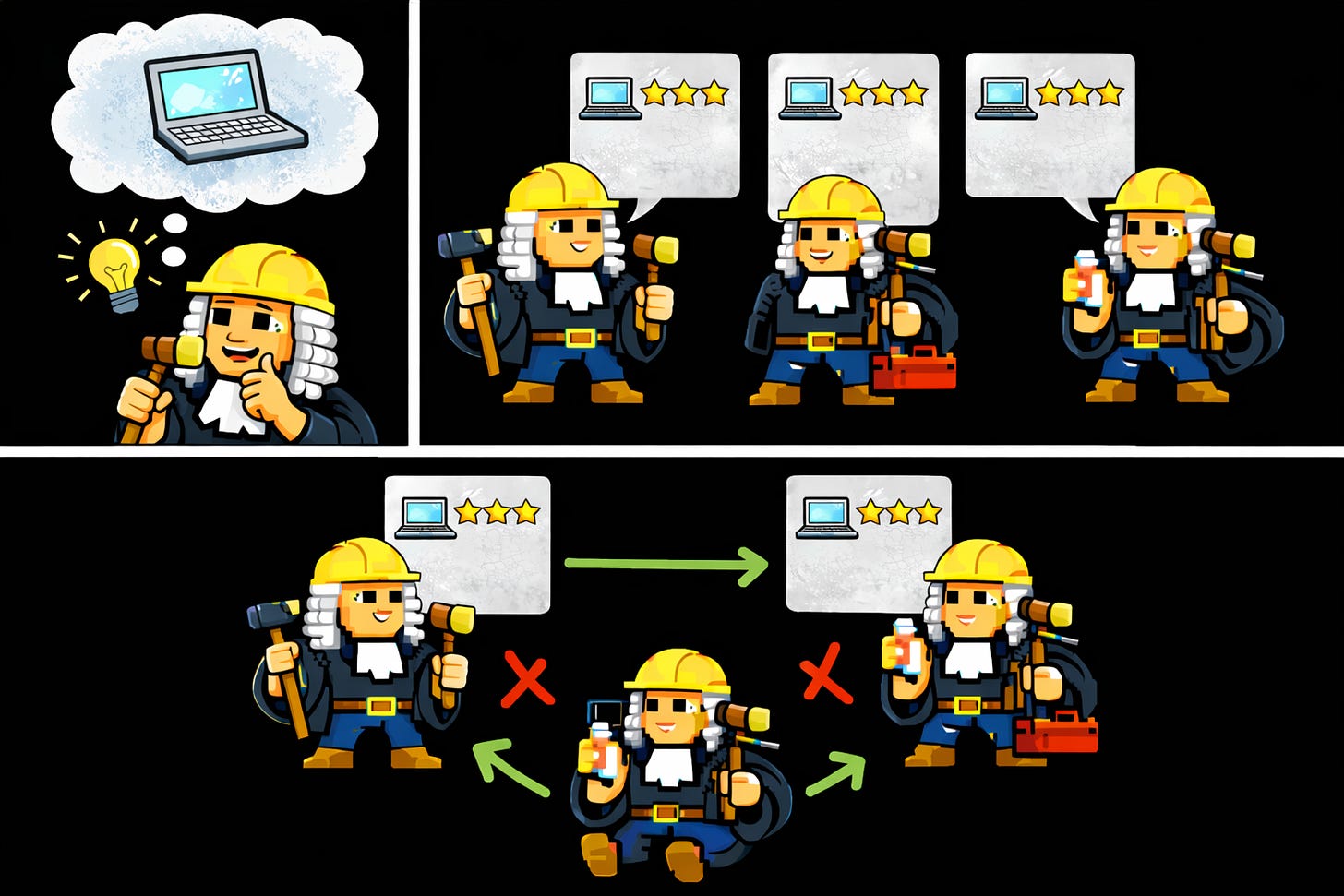

I’ll leave behind the zoological domain and turn to an example that clarifies the computational core of evolution. Let’s get concrete (pun intended?) and think about construction crews forming. We can start with genetic algorithms as usually practiced in computer science. Imagine an agent that generates many random variations on a template for a construction worker. Each variant should be evaluated for its fitness, which here is ability to contribute to the construction project. (In biology, it was implicitly the long-term survival of the genes driving behavior, per Richard Dawkins.) Generating and evaluating variants takes time, so we can speed up the process (increase throughput) by having agents collaborate, each taking on some of the work and sharing champion variants among themselves over time.
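One way to picture that collaboration is the sketch below, where several agents each search locally and periodically share their best variant. It assumes a "worker" is just a list of skill levels and that fitness is closeness to a hypothetical ideal profile. `IDEAL`, `collaborate`, and the other names are illustrative inventions.

```python
import random

IDEAL = [0.9, 0.4, 0.7]  # hypothetical ideal skill profile for the project

def fitness(worker):
    # Higher is better: negative squared distance from the ideal profile.
    return -sum((skill - target) ** 2 for skill, target in zip(worker, IDEAL))

def mutate(worker, rng):
    return [skill + rng.gauss(0.0, 0.1) for skill in worker]

def local_round(champion, rng, variants=10):
    # Each agent generates and evaluates its own batch of variants,
    # keeping its current champion in the running.
    candidates = [champion] + [mutate(champion, rng) for _ in range(variants)]
    return max(candidates, key=fitness)

def collaborate(num_agents=4, rounds=30, share_every=5):
    rngs = [random.Random(i) for i in range(num_agents)]
    champions = [[0.0, 0.0, 0.0] for _ in range(num_agents)]
    for r in range(1, rounds + 1):
        champions = [local_round(c, rng) for c, rng in zip(champions, rngs)]
        if r % share_every == 0:
            # Share the best champion so every agent continues from it.
            best = max(champions, key=fitness)
            champions = [list(best) for _ in range(num_agents)]
    return max(champions, key=fitness)

best_worker = collaborate()
```

In this sketch the `fitness` function is a fixed, shared judge that never changes.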

So far so good, but we have left efficiency on the table. We imagine that the ideal judging strategy is fixed, rather than itself being a skill worthy of evolution. However, we can adopt a more symmetric approach where the judges are also the construction workers.

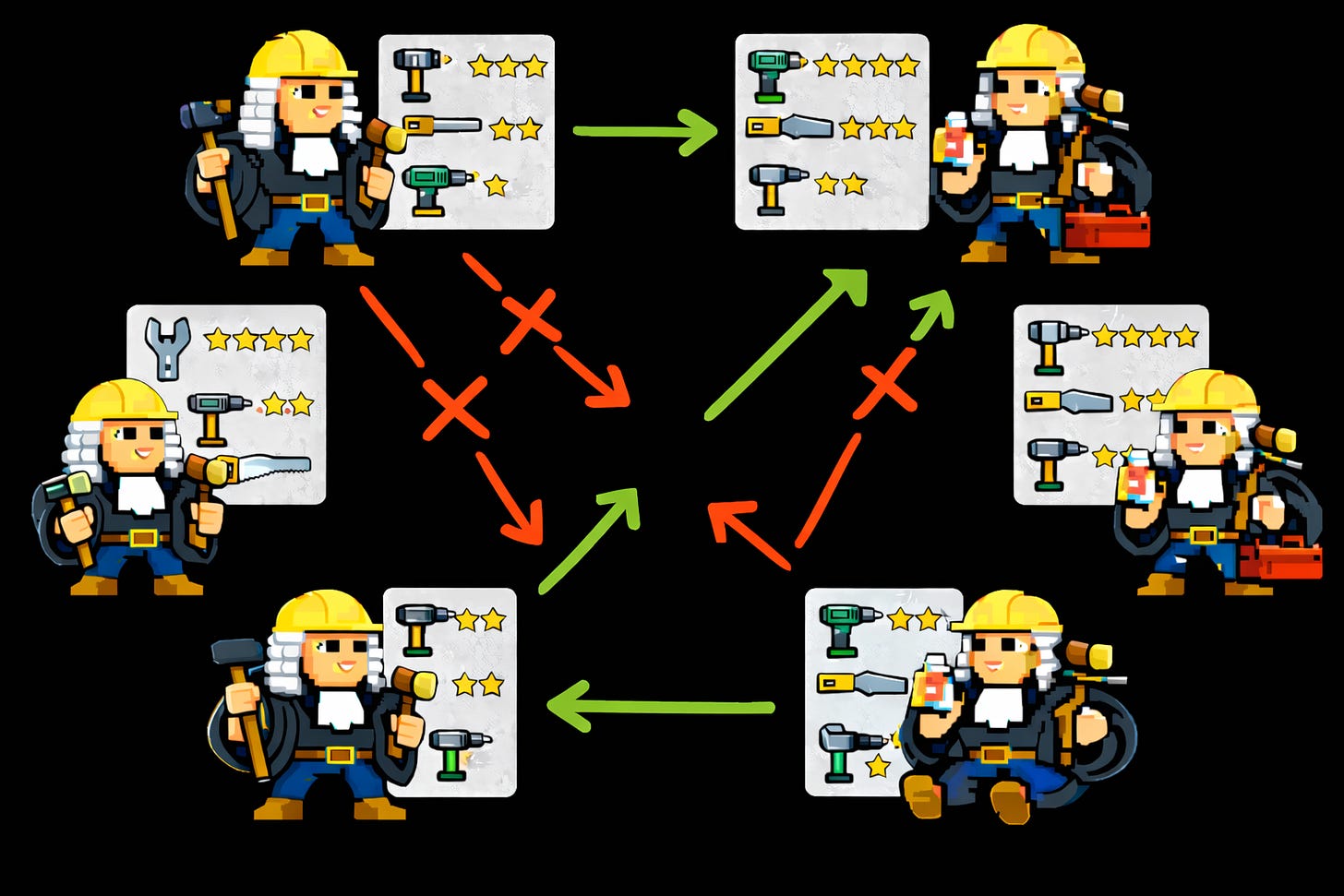

Let’s consider that there is a fixed set of tools for construction work, and every tool has accepted ways of showing off proficiency in it. Perhaps the agents in this algorithm periodically meet in their own kind of rodeo to show off the fanciest things they can do with tools. Everyone watching walks away with a pretty-standardized idea of how good at each tool each agent is.

Most animals are limited to the equivalents of fixed sets of tools. That is, there is a fixed set of signaling displays that the right individuals know how to produce and evaluate. Evolution has hardcoded the details into their brains. What if we could upgrade these protocol details within already-deployed brains? We get basically that workflow with cultural diffusion!

When one agent develops a new skill and makes a strong-enough case for its relevance, the skill spreads through the population, along with a propensity to evaluate it. In our firsthand experience, this dynamic is easiest to see through the development of new sports and art forms – which arguably are among the most purely signaling-focused parts of the human experience. Artists, athletes, and their patrons and fans clearly aren’t thinking about this protocol deliberately. They just feel that, in some ineffable sense, the cultural moment has come for a new signaling display. We can still argue that, fundamentally, what is happening is a distributed protocol for efficient evolution.
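The difference between hardcoded and culturally updated displays can be sketched in a few lines. This toy model assumes agents sit on a ring and that anyone who knows a display teaches it to both neighbors each round. The `Agent` class, the `diffuse` function, and the skill names are hypothetical.

```python
class Agent:
    def __init__(self, name, skills):
        self.name = name
        self.skills = dict(skills)     # latent proficiency per skill
        self.known_displays = set()    # displays this agent can perform and judge

    def judge(self, other):
        # A judge can only score displays that both parties know.
        shared = self.known_displays & other.known_displays
        return {d: other.skills.get(d, 0.0) for d in sorted(shared)}

def diffuse(agents, display, innovator_index, rounds):
    """Spread a display along a ring: each round, knowers teach both neighbors."""
    agents[innovator_index].known_displays.add(display)
    n = len(agents)
    for _ in range(rounds):
        knowers = [i for i, a in enumerate(agents) if display in a.known_displays]
        for i in knowers:
            agents[(i - 1) % n].known_displays.add(display)
            agents[(i + 1) % n].known_displays.add(display)

crew = [Agent(f"worker{i}", {"hammer": 0.5, "welding": 0.1 * i}) for i in range(5)]
for agent in crew:
    agent.known_displays.add("hammer")  # the "hardcoded" display everyone starts with

before = crew[0].judge(crew[4])   # welding skill is still latent and invisible
diffuse(crew, "welding", innovator_index=4, rounds=2)
after = crew[0].judge(crew[4])    # welding can now be displayed and judged
```

The agents themselves never change. Only the set of displays they know how to produce and evaluate does, which is the sense in which culture acts as a protocol upgrade on already-deployed brains.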

Another computing pattern I see here is programmability. The von Neumann architectures of CPUs are familiar as a very flexible way to reprogram hardware to do many different things. Our present example is closer to more-specialized computing domains, such as Tofino-style programmable network switches. There has been a profusion of network protocols underlying practical computing platforms, and previous generations of switches (key bits of networking hardware) had support for particular protocols built in. On the one hand, specialized switches will tend to run more quickly and use less power. On the other hand, the owner of such a switch is out of luck when an appealing new protocol is adopted by others. Programmable switches offer formats like P4 at just the right level of specificity to describe new protocols and get a pretty efficient implementation on top of the switch hardware. In other words, the owner of the switch can change the rules of networking without buying a new chip.

Here what I’m arguing is that human brains have circuitry specialized to both produce fitness signals and evaluate them in others. At the same time, we have programmability through culture. As a result, over hundreds of thousands of years, we have been able to employ the same meta-protocol in forming effective hunter-gatherer bands and multinational tech companies. The same old hardware is put to increasingly novel uses without needing to be refreshed itself. We get sophisticated computers (our brains) making the fitness judgments that guide evolution, with the chance to upgrade the approach as cultures innovate. It’s a tremendously more-scalable approach than just waiting to see whether animals manage to survive over extended lifespans.

Similar ideas have shown up in other schools of thought. Signaling in economics considers topics like how financial markets help spread reliable information, including through ideas like educational credentials as signals. Folks working at the intersection of economics and psychology like Joseph Henrich have also studied the dynamics of cultural evolution (see The Secret of Our Success). The incremental trick we’re going to take advantage of is following this analogy to distributed computer systems, to import good ideas.

Optimizing Further

To summarize:

- Evolution can be seen as a distributed search problem for better-and-better-adapted individuals, though it suffers from slow improvement because of how long natural selection takes to “notice” low fitness.
- The technique of signaling allows valuable fitness evaluation to happen much more quickly, accelerating the search.
- As fitness may vary over time, it is valuable to use culture as a way of reprogramming signaling, for even greater efficiency.

These points add up to the idea of humans as programmable evolutionary systems.

As we think about building ecosystems of artificially intelligent agents, we should be inspired by the ingenious-seeming distributed algorithm we just surveyed. Rather than seeing signaling as some contingent mechanism from biology, we can reconstruct why we might want it in artificial systems, even if we weren’t inspired by prior art. It’s a general technique for accelerating distributed search. However, it’s a missed opportunity to retain it in full detail, along with famous inefficiencies. For instance, we want to avoid cases where signaling can diverge from true fitness, as with runaway selection. Even bigger changes to the machinery may bring even larger payoffs.

Take as given the goal, for concreteness, of developing increasingly effective technology. A system, built of humans or AI agents, should iterate on developing new techniques, tending toward adoption of the superior ones. We will get a payoff by recognizing that the culture-driven evolution we take for granted can be seen as a distributed computing system, because there are at least a few big ideas from that world that we can adopt. The next two posts cover two of them and their potential to let AI systems tackle the problem of technological progress and deliver results at a much greater rate than we are used to. We may be able to avoid costly signaling altogether while still solving the problem that motivated it, thanks to one key asymmetry: the chance for AI agents to read each other’s source code.

The Meta-Protocol: Why Hardware Veto Trumps Software Signaling

Your analysis of signaling as a distributed protocol for evolution is excellent, but it addresses the "Software 4.0" layer of interaction. My research into the Ankyrin-G lattice focuses on a far more powerful, low-level system: the 190nm Logic Gate that has been perfected over millions of years of mammalian history.

The key difference between your "Signaling" model and the Fundamental Ankyrin Theory lies in Pattern Recognition Authority:

1. The Scalability of the Gate

You describe lemmings "fog dancing" to make latent qualities visible. This is a high-cost, probabilistic signal. However, the Ankyrin-G gate at the Axon Initial Segment (AIS) is a massive, high-fidelity pattern recognition system. It doesn't need a "dance" to identify a trillion odors or the subtle micro-stress of a predator. It has already "read the source code" of reality through millions of years of evolutionary trial and error.

2. Signaling vs. Determinism

Signaling is often a "search for a partner." The Ankyrin-G gate is a Search for Truth. While your lemmings are competing and cooperating, the individual's Ankyrin-G hardware is performing a Deterministic Veto. It is filtering out the "Data Storms" of the social environment to ensure that only Worthwhile, Appropriate, Safe, and Practical (WASP) signals are allowed to fire.

3. The 190nm Anchor

You mention that humans are "programmable evolutionary systems" through culture. This is true at the software level. But the Ankyrin-G lattice is the Immutable Hardware. It is the reason we can be programmable. It provides the stable physical architecture (the 190nm spacing) that prevents the "Digital Thread" of culture from snapping the "Biological Thread" of our survival.

The Core Distinction:

In your view, we use signaling to find the best variants. In the Ankyrin-G view, the hardware already knows the variants of truth; it uses the Veto to protect the system from the "Stochastic Friction" of the noise.

As we move toward AI agents that can read each other's source code, we aren't just improving signaling—we are finally building artificial systems that can match the Deterministic Pattern Recognition that the Ankyrin-G gate has been performing for millions of years. We are moving from the "Fog Dance" to the Ground Truth. https://ankglogicgatethomas.substack.com/